Table of Contents

- Overview

- Introduction

- System Reliability and Data Availability

- Objectives and Scope

- Requirements

- Task 1 – Migrate Partitions from a 128 GB SSD to a 500 GB SSD Using GParted

- Step 1 – Check Data Integrity with File System Consistency Check

- Step 2 – Back Up /home Data Files and Directories

- Step 3 – Prepare the New SSD

- Step 4 – Reboot and Remove Live USB

- Step 5 – Test the Operational Functioning of the New SSD

- Step 6 – Replace the native SSD

- Step 7 – Verify Boot and Partition Mounting

- Task 2 – Prepare the SSD Replica

- Step 1 – Clone SSD replica from primary SSD

- Step 2 – Test SSD replica as Bootable Clone

- Task 3 – Backup and Clone Strategies

- Backup

- Clone

- Example Commands for Clone and Rsync Synchronization

- Task 4 – Improvement and Automation After Migration

- Automate Backup and Synchronization Tasks

- Implement Health Monitoring Checks

- Maintain Disk and Partition Consistency

- Optimize System Performance

- Conclusions

Overview

This tutorial is intended for Ubuntu users (from hobbyists to developers or sysadmins) who wish to upgrade their existing OS installation from an older or smaller SSD to a larger, faster one — without losing data and without reinstalling everything from scratch.

It also targets users who want to implement a simple but effective disaster-recovery strategy: by creating a bootable clone and a regular replication of your home/data directories, you can safeguard against SSD failure, data corruption or accidental data loss.

By following this guide you’ll address the dual challenge of storage upgrade and data protection: migrate your OS safely, gain space and performance, and build a fallback mechanism that minimizes downtime and data loss.

All tools used here are open source, available from the standard Ubuntu repositories, and chosen for their stability, ease of use and good fit for SSD migration and disaster recovery. They include GParted, rsync, dd, smartmontools and Ubuntu’s native bootloader utilities, keeping the entire process transparent, efficient and free.

By completing this tutorial, you will both upgrade your SSD and establish a robust recovery mechanism that protects your system against data loss and downtime.

Introduction

In today’s environments, ensuring system reliability and data availability is essential for both operational efficiency and disaster recovery.

This guide provides a comprehensive walkthrough on how to replace an old SSD (Solid-State Drive) with a new, higher-performance one, migrate an Ubuntu OS installation, and implement asynchronous physical replication on a secondary SSD to safeguard data in case of hardware or system failure.

By combining these two essential tasks—hardware upgrade and data replication—it is possible to significantly improve system performance, enhance disaster recovery strategies, and minimize downtime and data loss in critical scenarios.

The process is outlined step-by-step, starting from the initial backup and migration of the Ubuntu OS, through to setting up asynchronous replication for continuous data protection.

Objectives and Scope

1 – Migrate the Ubuntu operating system and all associated partitions from the original SSD to a new SSD.

2 – Create a secondary bootable SSD replica to enable reliable disaster recovery.

3 – Implement separate strategies for system cloning and data backup.

4 – Plan and establish ongoing monitoring of SSD health, data consistency, and performance.

Requirements

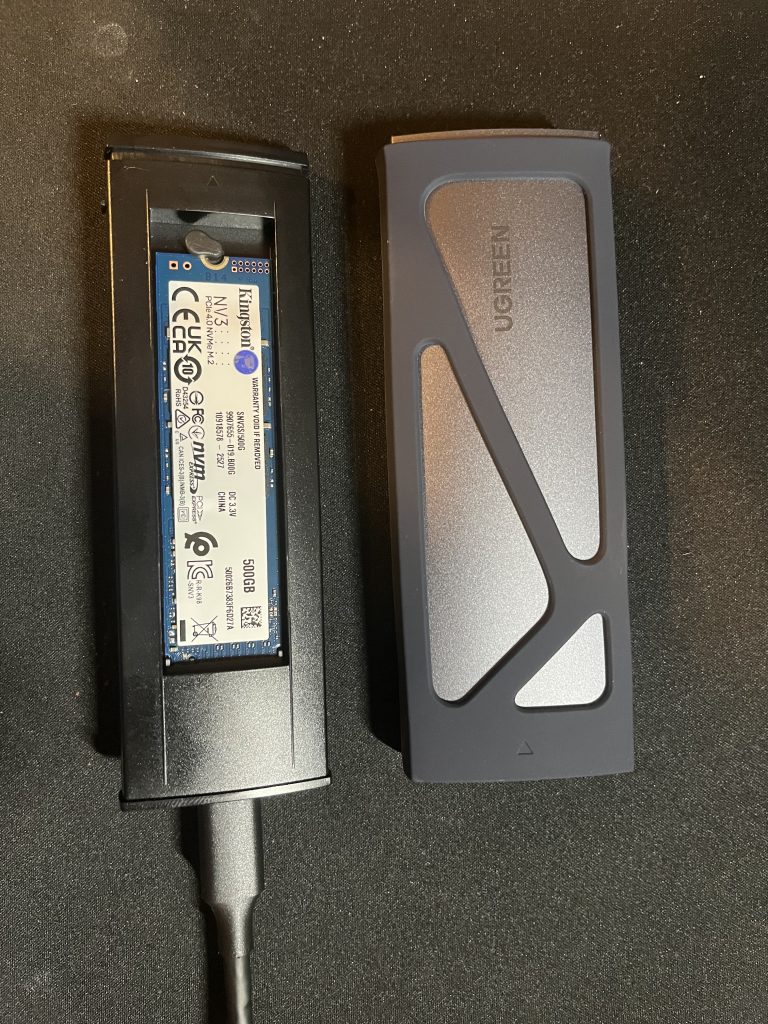

- 1 x Live Ubuntu 25.04 USB flash drive

- 2 x Kingston NV3 NVMe PCIe 4.0 Internal SSD 500GB M.2 2280 (SNV3S/500G)

- 1 x UGREEN SSD Enclosure, Tool-Free USB-C External, 10Gbps M.2 NVMe to USB Adapter/Reader (supports M and B&M keys and 2230/2242/2260/2280 SSDs)

- Ubuntu 25.04 OS installed on a native 128 GB SSD

- Ubuntu user (tor) with administrator privileges (sudo)

Task 1: Migrate Partitions from a 128 GB SSD to a 500 GB SSD Using GParted

The first goal is to migrate the existing Ubuntu OS installation from the native 128 GB SSD to a new 500 GB SSD.

This upgrade provides additional storage capacity while ensuring the system continues to operate smoothly without any data loss.

To work on unmounted partitions, we need to boot the system from a Live Ubuntu USB (select the USB flash drive in the BIOS boot menu*) and use GParted, a powerful graphical partition editor, as the main tool for this task.

*Restart your computer and repeatedly press the boot menu key. The key depends on your computer’s manufacturer and model, so check your device’s manual.

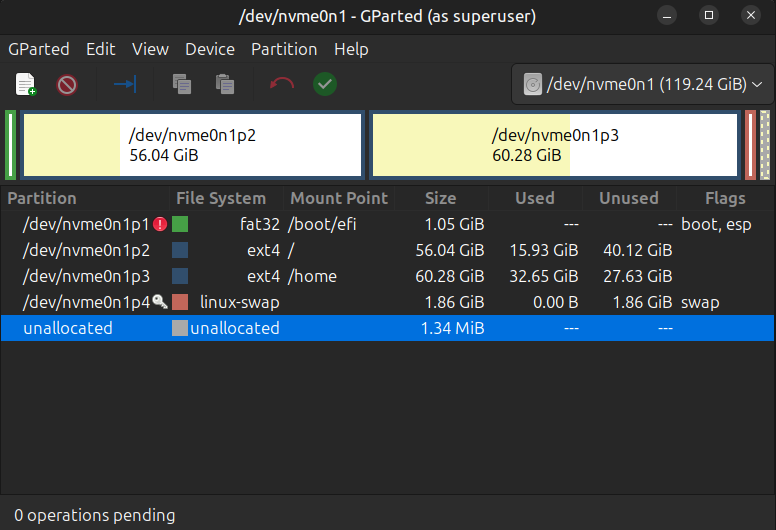

The native SSD is connected to an M.2 NVMe slot and is handled by the Linux nvme driver, which is why it appears as /dev/nvme0n1.

Open GParted and select the native SSD first.

128 GB SSD Partitions:

- Boot

- Root filesystem (/)

- Personal data (/home)*

- Swap

*I decided to separate the root (/) and home (/home) partitions during the first Ubuntu installation because it offers several advantages. If the system becomes corrupted or unbootable, personal data stored on /home remains safe on a separate partition, and even a full OS reinstallation won’t affect personal files and settings. Moreover, this structure provides greater flexibility for backups: as I will show later, you can back up the /home directory independently from system files.

Step 1: Check Data Integrity with “File System Consistency Check”

Close GParted. From the Bash terminal, force a full check of each filesystem to make sure it is clean before copying and migrating it. fsck works on filesystems (partitions), not on the whole disk, so check the root (partition 2) and /home (partition 3) filesystems separately:

sudo fsck -f /dev/nvme0n1p2 # -f forces a check even if the filesystem is marked clean

sudo fsck -f /dev/nvme0n1p3

If errors are found, fsck will prompt to fix them; use -y to auto-confirm all fixes.
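Because fsck checks one filesystem at a time, it has to be pointed at partitions such as /dev/nvme0n1p2 rather than at the whole disk. The sketch below, which assumes the root and /home filesystems are ext4 as in this setup, picks the ext2/3/4 partitions of a disk from lsblk output and checks each one in turn. The function names are hypothetical, and the partitions must be unmounted (as they are in the Live USB session).

```bash
# Hypothetical helper: print the ext2/ext3/ext4 partitions listed in
# `lsblk -lnp -o NAME,FSTYPE <disk>` output read from stdin.
ext_partitions() {
  # Column 1 is the full device path (-p), column 2 the filesystem type;
  # the disk itself, swap and vfat (EFI) partitions are skipped.
  awk '$2 ~ /^ext[234]$/ { print $1 }'
}

# Force a check of every ext filesystem on a disk (run as root, partitions unmounted).
check_disk() {
  lsblk -lnp -o NAME,FSTYPE "$1" | ext_partitions | while read -r part; do
    # -f forces the check, -y answers "yes" to every repair prompt;
    # stdin comes from /dev/null so fsck cannot consume the partition list
    fsck -f -y "$part" < /dev/null
    echo "$part: fsck exit code $? (0 = clean, 1 = errors corrected, 4 = errors left uncorrected)"
  done
}
```

For example, in a root shell (sudo -i) after sourcing the file that contains these functions: check_disk /dev/nvme0n1.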

Step 2: Back Up /home Data Files and Directories

Before starting the migration, back up all important data from your native SSD.

It is advisable to back up to a separate external drive or USB flash drive, so that you have a safe copy of your essential personal files before proceeding with the migration.
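One way to take this backup is a compressed tar archive: unlike a plain file copy, it keeps Linux ownership and permissions inside the archive even when the external drive is formatted with exFAT. This is a sketch, not the author's method; it assumes the old /home partition has been mounted read-only at /mnt/oldhome and the external drive is mounted at /media/ubuntu/BACKUP (both hypothetical paths), and the function name is illustrative.

```bash
# Hypothetical helper: archive a home directory to a backup drive and verify the archive.
# Usage: backup_home_archive SOURCE_DIR DEST_DIR  (run as root to read every file)
backup_home_archive() {
  local src_dir="${1%/}" dest_dir="$2" archive
  archive="$dest_dir/home-backup-$(date +%Y%m%d-%H%M%S).tar.gz"
  # -C switches to the parent directory so the archive stores relative paths (e.g. tor/...);
  # naming the directory itself (not "dir/*") also captures hidden dotfiles
  tar -czf "$archive" -C "$(dirname "$src_dir")" "$(basename "$src_dir")" || return 1
  # Read the archive back once to make sure it is complete and not corrupted
  tar -tzf "$archive" > /dev/null || return 1
  printf '%s\n' "$archive"
}
```

For example: sudo mkdir -p /mnt/oldhome && sudo mount -o ro /dev/nvme0n1p3 /mnt/oldhome, then, in a root shell, backup_home_archive /mnt/oldhome/tor /media/ubuntu/BACKUP.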

Step 3: Prepare the New SSD

1. Connect the new SSD via an external USB adapter during the Live USB session.

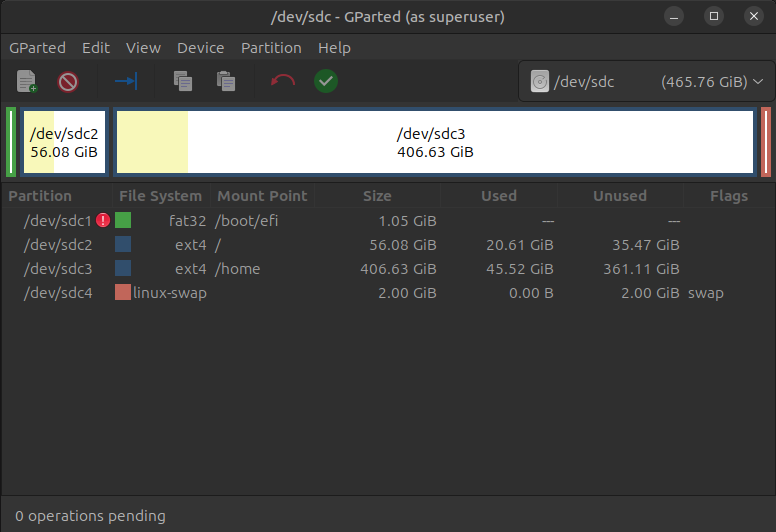

2. Open GParted and select the new SSD (as a USB-attached device, it appears with a name such as /dev/sda, /dev/sdb, /dev/sdc, etc.).

3. The SSD is unpartitioned out of the box, so you need to prepare it before use. First, create a partition table on it: Device → Create Partition Table → select GPT as the new partition table type.

4. Copy and paste the unmounted partitions 1, 2 and 3 (boot, root and /home) from the native SSD to the new one, one at a time:

- Select the native SSD and the partition, then Partition → Copy.

- Select the new SSD and its unallocated space, then Partition → Paste.

5. Resize the /home partition on the new SSD to take advantage of the new drive’s storage capacity. Leave the last 2 GB unallocated.

6. Next, manually create a swap partition: Partition → New, and in the “Create new Partition” window set Create as: Primary Partition and File system: linux-swap (using the remaining 2 GB).

500 GB SSD: copied and resized Partitions

7. Apply all operations.

8. Before mounting and testing the new SSD, run a file system consistency check on its root and /home partitions from the Bash terminal:

sudo fsck -f /dev/sdc2 # -f forces a full filesystem check

sudo fsck -f /dev/sdc3

Step 4: Reboot and Remove Live USB

Restart the system and disconnect the Live USB stick.

Step 5: Operational checks on the New SSD

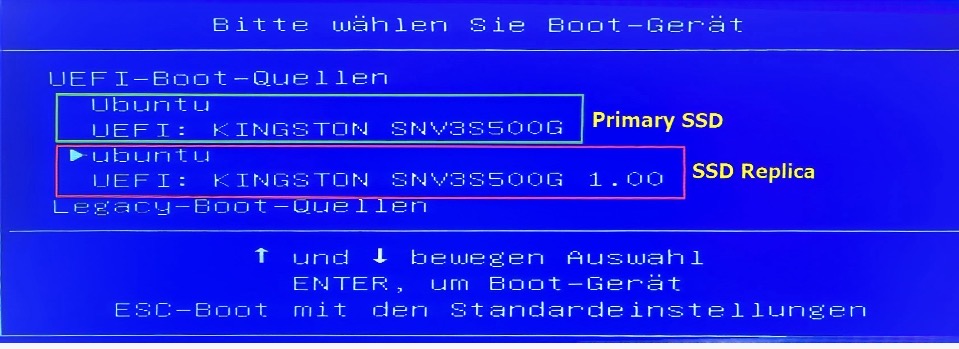

Boot from the new SSD (still connected via the external USB adapter) by selecting it in the BIOS boot menu.

Basic checks

1. System boot: verify that the system boots from the new SSD. If it does not, boot the Live USB again and reinstall GRUB on the new SSD (with grub-install and update-grub):

# Mount the root filesystem of the installed Ubuntu system to /mnt

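sudo mount /dev/sdc2 /mnt

# On UEFI systems, also mount the EFI System Partition (assumed here to be the Boot partition, /dev/sdc1) so that grub-install can write to it

sudo mount /dev/sdc1 /mnt/boot/efi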

# Bind the host system’s /dev directory (device files) into the chroot environment

sudo mount --bind /dev /mnt/dev

# Bind the host system’s /proc virtual filesystem (process information) into chroot

sudo mount --bind /proc /mnt/proc

# Bind the host system’s /sys virtual filesystem (hardware and kernel info) into chroot

sudo mount --bind /sys /mnt/sys

# Change root into the mounted system — you are now "inside" the installed system as if it were booted

sudo chroot /mnt

# Reinstall GRUB bootloader onto the specified disk (not a partition)

grub-install /dev/sdc

# Regenerate GRUB configuration files (detects OS kernels and updates boot menu)

update-grub

# Exit the chroot environment and return to the live system

exit

# Unmount everything below /mnt (bind mounts included) before rebooting

sudo umount -R /mnt

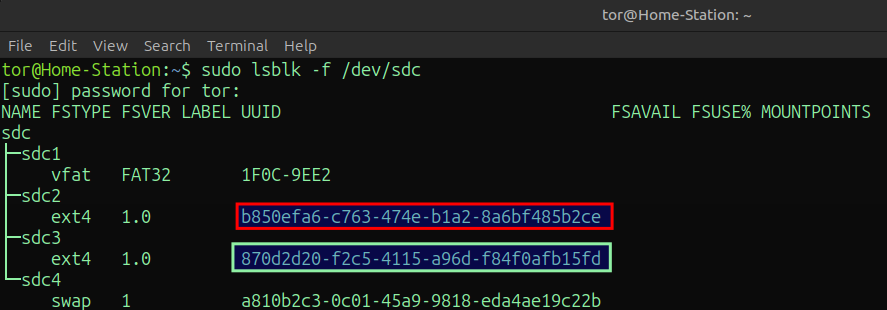

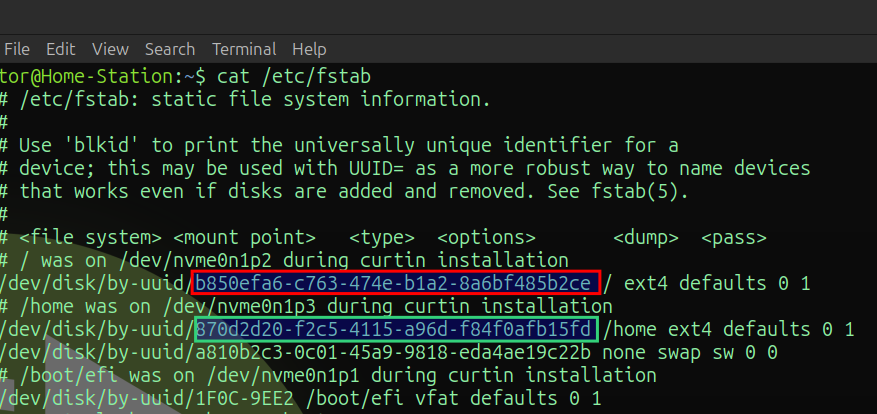

2. Check that /etc/fstab points to the correct UUIDs of the new disk. This file lists the filesystems to be mounted automatically at boot time and defines how and where each one is mounted.

List UUIDs:

sudo lsblk -f /dev/sdc

Print the contents of /etc/fstab:

cat /etc/fstab

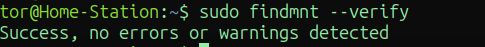

sudo findmnt --verify # check /etc/fstab syntax validity
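The comparison between lsblk and /etc/fstab can also be automated. The sketch below (function name hypothetical) reads the UUIDs that actually exist on the disk from stdin and reports every UUID= entry in an fstab file that has no matching filesystem. It assumes unquoted UUID=... entries, which is how Ubuntu writes them.

```bash
# Hypothetical helper: report fstab UUID= entries that do not exist on the disk.
# Usage: lsblk -no UUID /dev/sdc | fstab_uuid_check /etc/fstab
fstab_uuid_check() {
  local fstab="$1" known uuid result=0
  known=$(cat)   # UUIDs actually present, one per line (from lsblk or blkid)
  # Skip comment lines and keep only the value of UUID=... in the first column
  for uuid in $(awk '!/^[[:space:]]*#/ && $1 ~ /^UUID=/ { sub(/^UUID=/, "", $1); print $1 }' "$fstab"); do
    if printf '%s\n' "$known" | grep -qxF "$uuid"; then
      printf 'OK: %s\n' "$uuid"
    else
      printf 'MISSING: %s\n' "$uuid"
      result=1
    fi
  done
  return "$result"
}
```

A non-zero return status means at least one fstab entry refers to a filesystem that is not on the disk, typically the newly created swap partition.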

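If the UUIDs do not match, the system may not mount the partitions correctly. The swap partition, created from scratch, always gets a new UUID, so its /etc/fstab entry must be updated. Conversely, copied partitions can keep the same UUIDs as the originals, which causes boot or mount conflicts while both drives are connected; tune2fs can assign a new, unique UUID. The filesystem must not be mounted, so reboot and start from the Live Ubuntu USB first. After changing a UUID, update the matching /etc/fstab entry and regenerate the GRUB configuration (update-grub from a chroot, as shown above), otherwise the system will keep looking for the old ID.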

# Assign a new random UUID (Universally Unique Identifier) to the specified filesystem.

sudo tune2fs -U random /dev/sdc2

sudo tune2fs -U random /dev/sdc3

# List all block devices along with their new UUIDs, filesystem types, and labels.

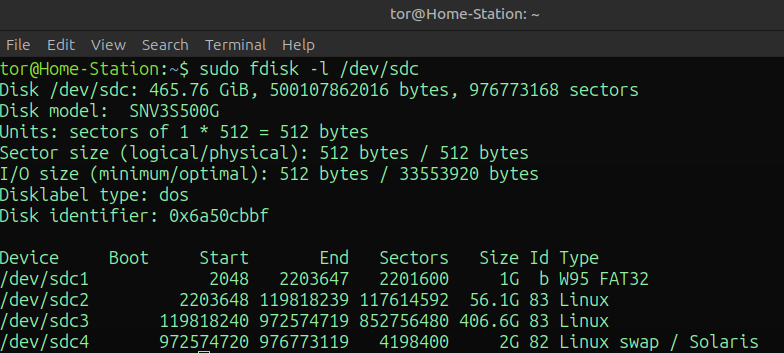

sudo blkid /dev/sdc*3. Partition alignment (important for SSDs)

Use fdisk to check partition alignment: each partition should start at a sector number that is a multiple of 2048 (a 1 MiB boundary with 512-byte sectors).

sudo fdisk -l /dev/sdc # list the SSD's partitions with start/end sectors, sizes and types
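Checking the start sectors by eye is error-prone, so the rule can be applied to the fdisk listing automatically. This sketch (function name hypothetical) reads `fdisk -l` output on stdin and flags every partition whose start sector is not a multiple of 2048. As an alternative, parted has a built-in check: sudo parted /dev/sdc align-check optimal 1 tests partition 1.

```bash
# Hypothetical helper: read `fdisk -l` output on stdin and check that every
# partition starts on a multiple of 2048 sectors (or of the value given as $1).
check_alignment() {
  awk -v unit="${1:-2048}" '
    # Partition rows start with the device path, e.g. /dev/sdc1
    $1 ~ /^\/dev\// {
      # On MBR disks a "*" boot-flag column may precede the start sector
      start = ($2 == "*") ? $3 : $2
      if (start % unit == 0) {
        print $1, start, "aligned"
      } else {
        print $1, start, "MISALIGNED"
        bad = 1
      }
    }
    END { exit bad }'
}
```

Usage: sudo fdisk -l /dev/sdc | check_alignment. The function returns a non-zero status if any partition is misaligned.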

Step 6: Replace the Old SSD

After all checks have passed, power off the computer, physically remove the old native SSD and install the new one in the motherboard’s M.2 slot.

Step 7: Verify Boot and Partition Mounting

Ensure that Ubuntu boots correctly and automatically mounts all partitions from the new primary SSD.

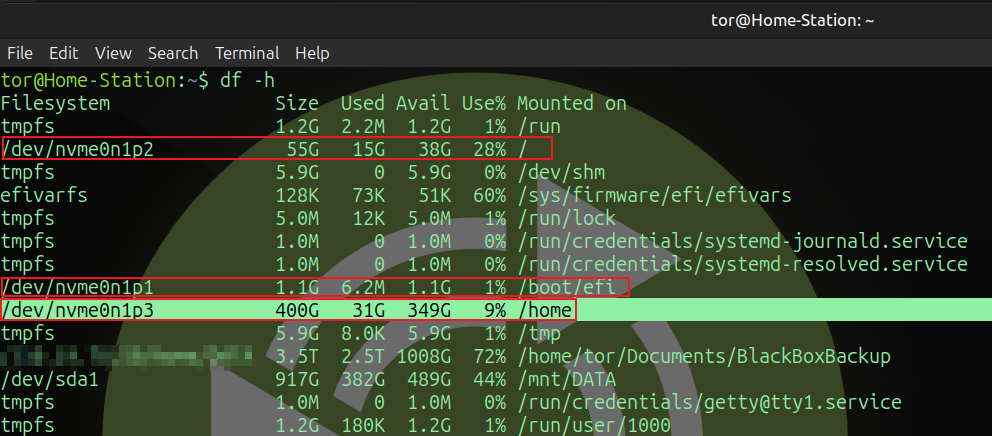

Once connected to the M.2 NVMe slot, the new SSD appears as nvme0n1. Running the disk free command (df -h) shows the expanded storage space on the /home partition (nvme0n1p3).
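Because the kernel names partitions differently depending on the disk name, the same /home partition is /dev/sdc3 while attached over USB and /dev/nvme0n1p3 in the M.2 slot. The rule (a "p" is inserted when the disk name ends in a digit) fits in a tiny helper, sketched here with a hypothetical name, which is handy when adapting the commands in this guide to your own device names.

```bash
# Hypothetical helper: build a partition device name from a disk and a partition number.
part_dev() {
  case "$1" in
    *[0-9]) printf '%sp%s\n' "$1" "$2" ;;  # e.g. /dev/nvme0n1 + 3 -> /dev/nvme0n1p3
    *)      printf '%s%s\n' "$1" "$2" ;;   # e.g. /dev/sdc + 3 -> /dev/sdc3
  esac
}
```

For example, sudo fsck -f "$(part_dev /dev/nvme0n1 3)" checks the /home partition regardless of how the disk is attached, as long as the disk name is right.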

Task 2: Prepare the SSD Replica

Step 1: Clone SSD replica from the primary SSD

- Boot again from the Live Ubuntu USB.

- Connect the secondary SSD (replica) via the external USB adapter.

- In GParted, create a new GPT partition table on the SSD replica.

- Copy and paste, one at a time, all partitions from the primary SSD to the SSD replica.

- Once the partitions are created, run a file system consistency check on them (see above).

Step 2: Test SSD replica as bootable clone

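Try to boot the OS from the SSD replica (via the USB adapter) and verify that the system boots. Keep in mind that a clone carries the same filesystem UUIDs as its source, so with both drives connected, make sure the mounted filesystems really come from the replica (df -h shows the device behind each mount point).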

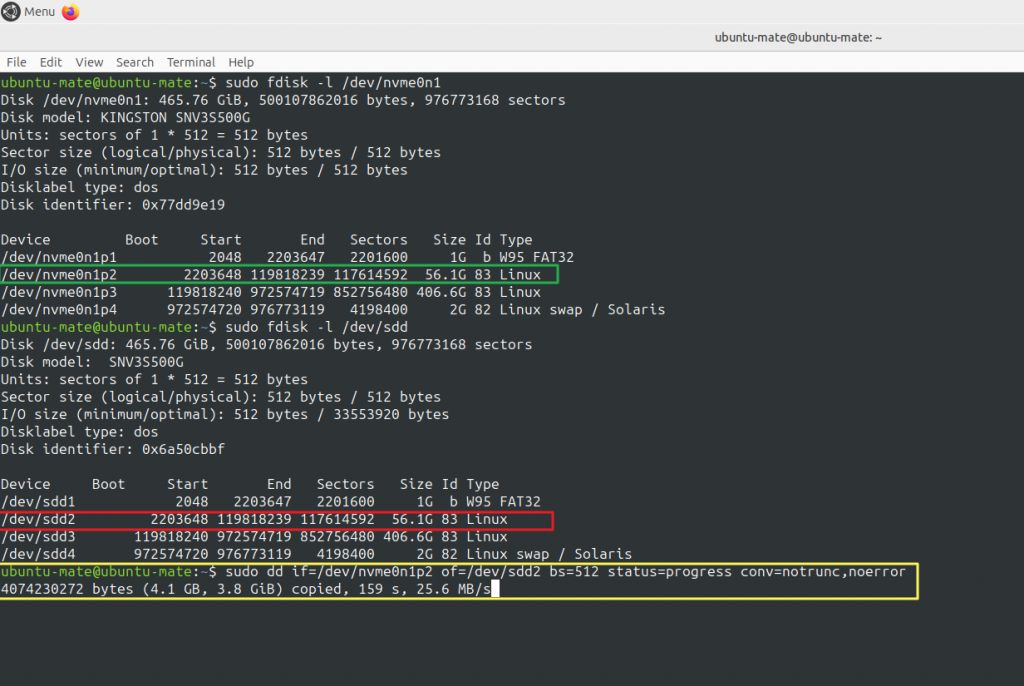

After logging in, you can perform additional checks from the Bash terminal to compare both SSDs:

df -h # show used and available space on each mounted filesystem

sudo fdisk -l # list partitions with their start/end sectors and sizes

Partition sizes (used/available) and alignment must be identical between the primary SSD (nvme0n1) and the SSD replica (now seen as /dev/sdd).
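To compare the two layouts precisely rather than by eye, the partition start sectors and sizes can be extracted from sfdisk --dump output (sfdisk ships alongside fdisk) and compared. This is a sketch with hypothetical function names; device names and partition UUIDs are deliberately ignored because they are expected to differ between the drives, and both drives are assumed to report the same logical sector size.

```bash
# Hypothetical helper: reduce `sfdisk --dump` output to "start size" pairs, one per partition.
partition_layout() {
  # Partition lines look like: /dev/sdd1 : start=        2048, size=     1048576, type=...
  sed -n 's/^[^:]*: *start= *\([0-9]*\), *size= *\([0-9]*\),.*/\1 \2/p'
}

# Compare two disks (run as root); returns 0 when the layouts are identical.
same_layout() {
  local a b
  a=$(sfdisk --dump "$1" | partition_layout)
  b=$(sfdisk --dump "$2" | partition_layout)
  # Empty output means sfdisk could not read a disk: treat that as a mismatch
  [ -n "$a" ] && [ "$a" = "$b" ]
}
```

Usage, in a root shell: same_layout /dev/nvme0n1 /dev/sdd && echo "identical layout".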

Task 3: Backup and Clone Strategies

Backup and clone strategies are fundamental for maintaining a productive and resilient environment.

Together, they protect data, minimize downtime, ensure operational continuity, and enable safe change management.

Below is a concise overview of their purpose, differences, and best practices.

- Clone:

A complete, often bootable, copy of a system, disk, or virtual machine that mirrors the source exactly at the time of creation. Clones are useful for rapid recovery, testing, deployment, or migration scenarios.

- Backup:

A copy of data (files, databases, system states) that can be restored after loss, corruption, or accidental modification. Backups may be full or incremental, and should always be stored in a separate location.

1. Weekly System Clone

Once per week, create a full clone of the system using a Live Ubuntu USB and the built-in dd tool. (Alternatively, tools such as the menu-driven Clonezilla or ddrescue can be used.)

This process performs a low-level, block-by-block copy, duplicating raw data from one device or file to another—independent of the filesystem structure.

Before starting the cloning procedure, verify which device name has been assigned to each drive and make sure that no partition involved (especially on the destination) is mounted, so that the process runs safely and correctly:

sudo fdisk -l # to find which hard drive name is assigned to SSD replica

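sudo mount | grep sdd # check whether any partition of the SSD replica is mounted

sudo umount /dev/sdd2 # unmount the destination partition if necessary

# Clone the root filesystem partition (partition 2); bs=4M is much faster than 512-byte blocks, and conv=fsync flushes all data to the replica before dd exits

sudo dd if=/dev/nvme0n1p2 of=/dev/sdd2 bs=4M status=progress conv=fsync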
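Because dd overwrites whatever of= points to without asking, a small guard can refuse to start when either partition is still mounted. This sketch (hypothetical function names) reads /proc/mounts, whose first column is the mounted device; the MOUNTS variable exists only so the check can be tried against a sample file. It uses the standard GNU dd options bs=4M, status=progress and conv=fsync. Note that /proc/mounts may list a device under another name (for example a /dev/mapper path for LVM or encrypted volumes), which this simple check would not catch.

```bash
# Hypothetical helper: succeed if the given device appears as mounted in /proc/mounts.
is_mounted() {
  # Column 1 of /proc/mounts is the device; exit 0 only if a line matches
  awk -v dev="$1" '$1 == dev { found = 1 } END { exit !found }' "${MOUNTS:-/proc/mounts}"
}

# Hypothetical wrapper: block-level copy of one partition onto another (run as root).
safe_clone() {
  local src="$1" dst="$2" dev
  for dev in "$src" "$dst"; do
    if is_mounted "$dev"; then
      echo "refusing: $dev is mounted" >&2
      return 1
    fi
  done
  # 4 MiB blocks are much faster than 512-byte blocks; fsync flushes before dd exits
  dd if="$src" of="$dst" bs=4M status=progress conv=fsync
}
```

Usage, in a root shell from the Live USB session: safe_clone /dev/nvme0n1p2 /dev/sdd2.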

2. Home Partition Synchronization

Synchronize the contents of the user’s Home partition between the primary SSD and its replica. Cloning a large partition block by block when only around 9% of its space is used would be a waste of time.

The rsync utility is ideal for this task, providing fast, efficient and flexible mirroring of directory trees while maintaining exact copies:

First (full) backup: mount the SSD replica’s Home partition on the /mnt/ssdreplica directory (create this directory once on the primary SSD’s file system before running the first backup).

rsync -aHAXv --progress /home/tor/ /mnt/ssdreplica/tor/ # full backup of the user's home directory; -H, -A and -X preserve hard links, ACLs and extended attributes, and the trailing slash on the source also copies hidden dotfiles, which /home/tor/* would skip

Incremental backup: after the first backup, rsync compares file modification times and sizes between source and destination, and only new or modified files are transferred.

rsync -aHAXv --delete --progress /home/tor/ /mnt/ssdreplica/tor/ # incremental backup; --delete also removes files from the replica that no longer exist in the source

After every backup, make sure the replica truly matches the source:

sudo diff -qr /mnt/ssdreplica/tor /home/tor # compare the home directory content between destination and source

Task 4: Improvement and Automation After Migration

Once the system migration and replication setup have been successfully completed, several improvements can be introduced to enhance system reliability, automate maintenance tasks, and reduce human error. These post-migration optimizations ensure that the environment remains consistent, up-to-date, and easy to recover in case of failures.

1. Automate Backup and Synchronization Tasks

Manual execution of rsync backups can be replaced by scheduled jobs.

Use cron (optionally calling a bash script) to run regular incremental synchronizations between the primary SSD and the replica. You can also perform a selective backup, targeting specific directories that contain valuable data, rather than backing up the entire user’s Home directory.
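If you prefer to call a bash script from cron rather than a bare command, the script can add a safety check first: if the replica’s partition is not mounted, /mnt/ssdreplica is just an empty directory on the primary SSD, and rsync would quietly copy everything onto the primary SSD itself. The sketch below assumes the mount point /mnt/ssdreplica used in this guide; the function name is hypothetical, and mountpoint(1) comes from util-linux.

```bash
# Hypothetical helper: mirror a directory tree onto the mounted SSD replica.
# Usage: mirror_home /home/tor /mnt/ssdreplica  ->  syncs into /mnt/ssdreplica/tor/
mirror_home() {
  local src="${1%/}" mnt="${2%/}" dest
  dest="$mnt/$(basename "$src")"
  # Refuse to run unless the replica is really mounted at $mnt
  if ! mountpoint -q "$mnt"; then
    printf 'refusing: %s is not a mount point\n' "$mnt" >&2
    return 1
  fi
  # "src/" (trailing slash) copies the directory contents, hidden dotfiles included;
  # -aHAX keeps permissions, hard links, ACLs and xattrs; --delete mirrors deletions
  rsync -aHAX --delete "$src/" "$dest/"
}
```

Saved in a script (for example a hypothetical /home/tor/bin/mirror-home.sh ending with the line mirror_home /home/tor /mnt/ssdreplica), it can be scheduled from the crontab in place of a bare rsync command for the whole home directory.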

crontab -e # edit the current user's crontab

Example: a daily (7 p.m.) backup of the Documents directory using cron. It keeps a permanent log for later review or troubleshooting.

# Edit this file to introduce tasks to be run by cron

#

# Each task to run has to be defined through a single line

# indicating with different fields when the task will be run

# and what command to run for the task

#

# To define the time you can provide concrete values for

# minute (m), hour (h), day of month (dom), month (mon),

# and day of week (dow) or use '*' in these fields (for 'any').

#

# Notice that tasks will be started based on the cron's system

# daemon's notion of time and timezones.

#

# Output of the crontab jobs (including errors) is sent through

# email to the user the crontab file belongs to (unless redirected).

#

# For example, you can run a backup of all your user accounts

# at 5 a.m every week with:

# 0 5 * * 1 tar -zcf /var/backups/home.tgz /home/

#

# For more information see the manual pages of crontab(5) and cron(8)

#

# m h dom mon dow command

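0 19 * * * rsync -aHAXv --delete /home/tor/Documents/ /mnt/ssdreplica/tor/Documents/ 2>&1 | tee -a /var/log/rsync-backup.log

The tee -a flag stands for append: it adds new output at the end of the file without removing previous entries, and 2>&1 makes sure error messages are logged too. Because this is tor’s personal crontab, the log file must be writable by tor; create it once with sudo touch /var/log/rsync-backup.log && sudo chown tor /var/log/rsync-backup.log.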

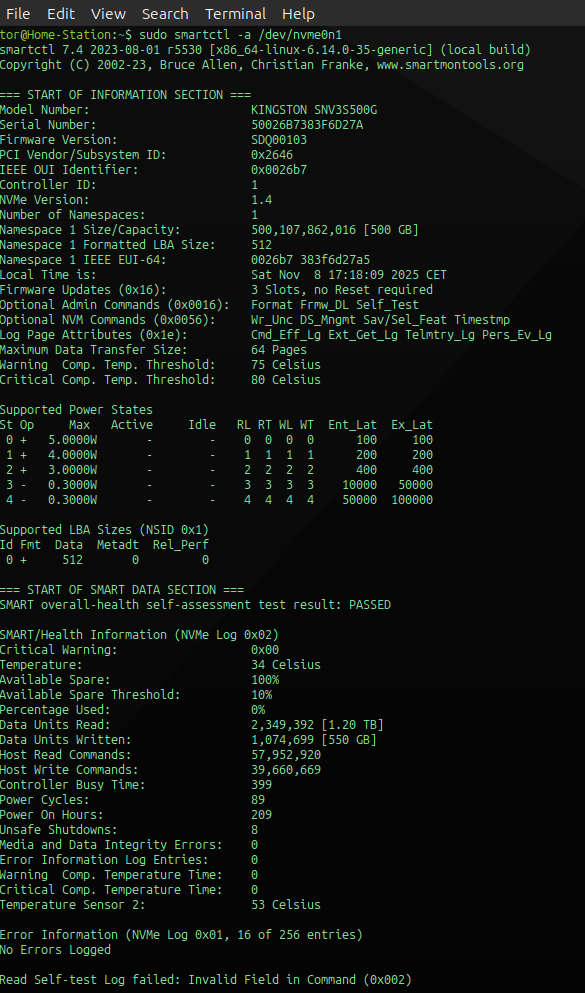

2. Implement Health Monitoring Checks

Regularly monitor the health of both SSDs using SMART diagnostics and filesystem checks to detect potential issues early:

sudo apt-get update # refresh the package index

sudo apt-get install smartmontools # install the smartmontools package

sudo smartctl -a /dev/nvme0n1 # collect and report the drive's internal health statistics

sudo smartctl -H -A /dev/nvme0n1 # concise health summary

Summary of SMART monitoring data available for SSDs:

| Category | Description / What It Checks | Typical SMART Attributes |
|---|---|---|
| Health Status | Overall condition reported by the SSD’s firmware. A “PASSED” result means the drive is operating normally; “FAILED” indicates predicted failure. | SMART overall-health self-assessment |
| Lifetime Statistics | Shows how long the SSD has been in use and how often it has been powered on or off. | Power_On_Hours, Power_Cycle_Count, Unsafe_Shutdowns |
| Data Integrity | Detects uncorrectable read/write errors or damaged blocks. Useful for spotting early signs of failure. | Media and Data Integrity Errors, CRC_Error_Count, Uncorrectable_Errors |
| Wear-Leveling & Endurance | Monitors NAND flash wear and remaining life expectancy. Indicates how much write endurance has been consumed. | Percentage_Used, Wear_Leveling_Count, Total_LBAs_Written, Data_Units_Written |
| Temperature & Thermal Events | Tracks current temperature, historical highs, and thermal throttling events. | Temperature, Thermal_Throttle_Status, Temperature_Sensor_* |
| Self-Tests | Built-in diagnostic tests that verify the SSD controller and NAND health (can be run manually). | smartctl -t short, smartctl -t long results |
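The categories in the table above can be condensed into a quick pass/fail check for a script or cron job. This sketch assumes the NVMe output format of smartctl (lines such as "Percentage Used:" and "Media and Data Integrity Errors:"); the function name is hypothetical, and the default 80% wear threshold is an arbitrary example, not a vendor recommendation. For unattended, continuous monitoring, smartmontools also ships the smartd daemon.

```bash
# Hypothetical helper: read `smartctl -H -A` output (NVMe format) on stdin and
# print a one-line verdict; returns 1 when the drive deserves a closer look.
smart_summary() {
  awk -v max_used="${1:-80}" '
    /overall-health self-assessment test result:/ { health = $NF }
    /^Percentage Used:/ { used = $3; sub(/%/, "", used) }
    /^Media and Data Integrity Errors:/ { media = $NF }
    END {
      # Healthy = firmware says PASSED, wear below the threshold, no media errors
      ok = (health == "PASSED" && used + 0 < max_used + 0 && media + 0 == 0)
      printf "health=%s used=%s%% media_errors=%s -> %s\n", health, used, media, (ok ? "OK" : "CHECK DRIVE")
      exit !ok
    }'
}
```

Usage: sudo smartctl -H -A /dev/nvme0n1 | smart_summary (optionally pass a different wear threshold, e.g. smart_summary 90).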

3. Maintain Disk and Partition Consistency

After several synchronization cycles, it is advisable to verify that partition alignment, UUIDs, and mount points remain correct on SSD replica.

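sudo fsck -nf /dev/sdd2 # read-only consistency check of the replica's root filesystem (-n answers "no" to every repair prompt)

sudo fsck -nf /dev/sdd3 # same check for the replica's /home filesystem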

sudo lsblk -f # list UUIDs

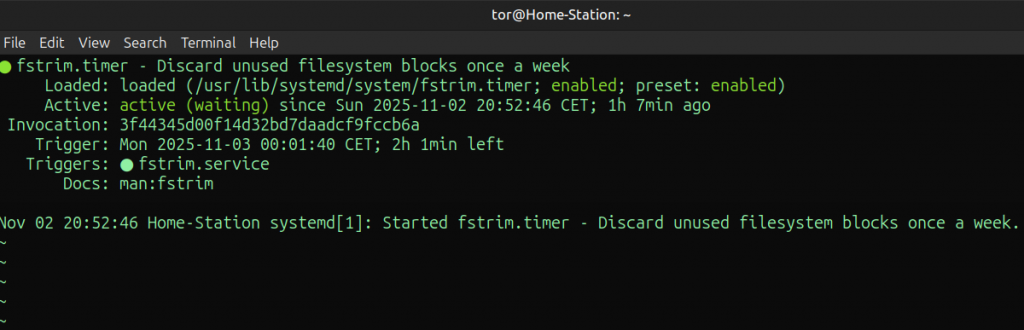

cat /etc/fstab # print the fstab file content

4. Optimize System Performance

Adjust I/O scheduler and TRIM settings to ensure optimal SSD performance and regular maintenance of free space, preventing performance degradation over time:

sudo systemctl enable fstrim.timer #enable the automatic weekly service provided by Ubuntu

sudo systemctl start fstrim.timer #start the automatic weekly service provided by Ubuntu

sudo systemctl status fstrim.timer # check the automatic weekly service provided by Ubuntu

FSTRIM process running on Ubuntu:

The I/O scheduler decides how read and write requests to a disk are ordered and dispatched. NVMe SSDs can handle thousands of concurrent I/O requests in parallel using internal queues, so they don’t need complex scheduling at the kernel level:

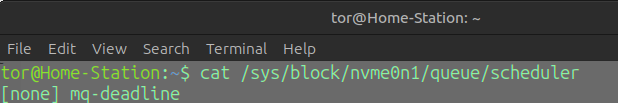

cat /sys/block/nvme0n1/queue/scheduler #Check the Current Scheduler

output:

The entry in square brackets is the active scheduler; in my case it is [none], meaning that no kernel I/O scheduler is applied.

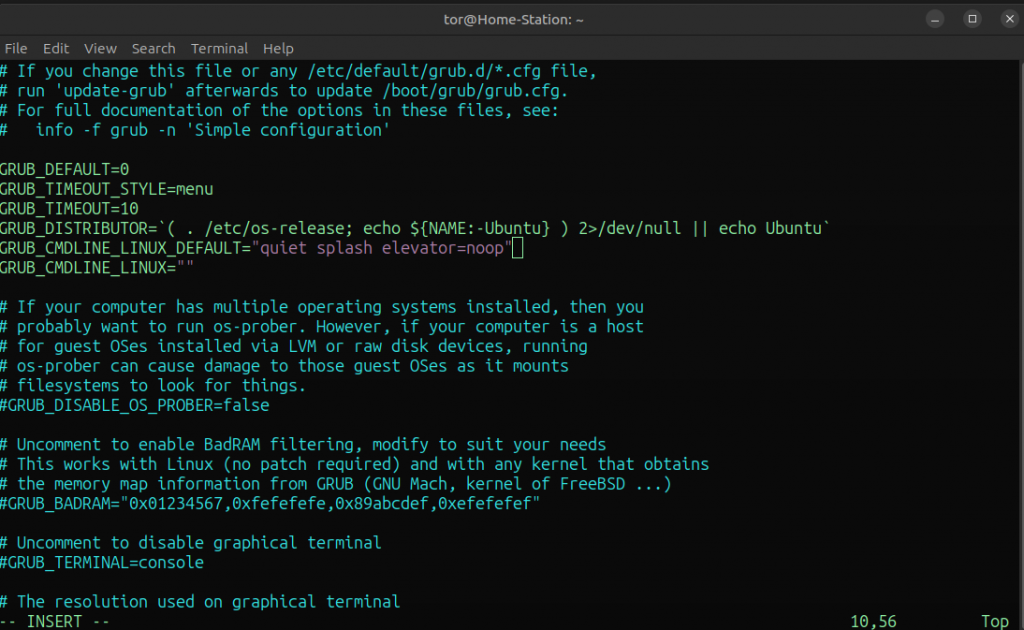
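To check the active scheduler from a script (for example as part of a periodic health check), the bracketed entry can be extracted with sed. This is a sketch with a hypothetical function name; the SYSFS variable exists only so the function can be tried against a sample file instead of the real /sys.

```bash
# Hypothetical helper: print the active I/O scheduler of a block device,
# i.e. the entry shown in square brackets, e.g. "[none] mq-deadline" -> none.
# SYSFS defaults to /sys and can point to a sample tree for testing.
active_scheduler() {
  sed -n 's/.*\[\([^]]*\)\].*/\1/p' "${SYSFS:-/sys}/block/$1/queue/scheduler"
}
```

Usage: active_scheduler nvme0n1 prints none on a system configured like the one above.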

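Otherwise, to disable the scheduler permanently, note that current kernels no longer honor the elevator= boot parameter (and the former noop scheduler is called none on modern kernels), so adding it to /etc/default/grub has no effect. Use a udev rule instead:

sudo vim /etc/udev/rules.d/60-ioschedulers.rules

and add your scheduler choice:

ACTION=="add|change", KERNEL=="nvme[0-9]n[0-9]", ATTR{queue/scheduler}="none"

Save the changes and reload the udev rules:

sudo udevadm control --reload-rules && sudo udevadm trigger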

Conclusions

This procedure successfully demonstrates how to upgrade an existing Ubuntu installation to a larger and faster SSD while maintaining data integrity and ensuring operational continuity through replication.

By following the outlined steps—beginning with filesystem validation, secure data backup, and precise partition migration—the system upgrade can be executed safely and efficiently. The verification of UUIDs, GRUB configuration, and partition alignment ensures that the new drive operates reliably as the primary system disk.

In parallel, setting up a secondary SSD replica provides an effective safeguard against data loss and hardware failure. The combination of full cloning (via dd) and incremental synchronization (via rsync) establishes a flexible, asynchronous replication strategy that maintains an up-to-date mirror of the main system with minimal performance impact. Remember that keeping a backup is different from performing Disaster Recovery (DR): DR restores operational services, whereas backups enable point-in-time restoration of data to recover from loss, file corruption or accidental deletion.

The SSD replica is also an essential tool whenever I upgrade Ubuntu to a newer release. With this setup, I can carefully monitor the system over time to ensure full compatibility with the updated version. If any conflicts arise with installed applications or drivers, I can simply boot from the Ubuntu installation on the SSD replica and continue operating without interruption.

Beyond the basic migration and replication, the post-migration improvements introduced in Task 4 add an additional layer of reliability and automation that transforms this setup into a long-term, self-maintaining environment:

- Automated Synchronization and Backups

By scheduling regular rsync jobs via cron, the backup process becomes autonomous. This eliminates manual intervention, keeps data fresh, and provides predictable, consistent replication cycles.

- Health Monitoring and Predictive Maintenance

Regular SMART monitoring with smartctl and periodic fsck scans make it possible to detect early signs of disk wear, bad blocks or controller degradation, allowing preventive maintenance before a critical failure occurs.

- Consistency Verification

Regular validation of partition UUIDs and partition alignment helps ensure that both SSDs remain consistent and bootable after repeated cloning or rsync operations, preserving system reliability.

- Performance Optimization

Using the none I/O scheduler (well suited to NVMe drives) and enabling the fstrim.timer service keep SSD throughput and longevity at their best. Periodic TRIM operations tell the SSD which blocks are no longer in use, which helps maintain write performance over time.

These improvements not only extend the life of the hardware but also minimize the operational burden on the user.

The result is a resilient, automated, and high-performance Ubuntu environment capable of sustaining both day-to-day workloads and disaster-recovery scenarios with minimal downtime.

Author Biography

“I am an experienced Network and Cloud Administrator, specializing in the design and operation of secure, high-availability infrastructures. With professional certifications in Cisco Switching and Routing (CCNA, CCNP) and Check Point Firewall Administration (CCSA), I bring in-depth expertise in network security and SAP connectivity solutions for customers and partners over dedicated VPN tunnels.

I am skilled in managing hybrid cloud environments, firewall policies, routing technologies, and SAP network infrastructure. I have developed strong analytical skills in troubleshooting and supporting organizational IT growth on a global scale. I am currently employed by a leading Japanese multinational in the IT and telecommunications industry.”